Quick Takeaways

- ChatGPT can provide helpful guidance in low-risk situations, such as brainstorming, organizing thoughts, or drafting messages.

- It doesn’t truly understand your values, emotions, or personal history, and its advice is based on pattern prediction—not lived experience or judgment.

- ChatGPT’s advice can fall short in emotional, ethical, or high-stakes decisions, where nuance, accountability, and human insight matter.

- AI can be a starting point, but it should not be a substitute for human support, especially when it comes to mental health concerns or major life decisions.

These days, it’s common to turn to ChatGPT for advice on everything from relationships and career to exploring your mental health, handling tough conversations, or making basic life decisions. Whether you’ve done it yourself or you know someone else who has, it’s natural to wonder: Does ChatGPT give good advice?

When you want answers, talking to ChatGPT about your problems can seem natural. AI is fast, free, and doesn’t force you to be vulnerable. If you’ve found yourself confiding in ChatGPT about your problems, though, it’s important to understand when it offers useful advice, where it falls short, and how to use it responsibly to help you make decisions.

How ChatGPT Actually Generates Advice

If you’ve used ChatGPT for advice, it’s important to understand how it works.

ChatGPT is a Large Language Model (LLM), a technology that’s trained on massive datasets to identify patterns in language: words, sentences, and ideas. Instead of thinking through situations (like a friend or therapist would), AI predicts which words are most likely to come next. It shares the “good advice” you would likely hear in a similar conversation. The problem is that its responses are based on patterns across millions of pieces of data, not lived experience, a practical understanding of human behavior, or genuine insight.

In short, AI can’t offer advice that reflects your personal history, values, or emotions. Instead, it relies on the statistical patterns it finds in data and research and can't account for nuance. It doesn’t really know or understand you, despite sometimes saying that it “gets you” or attempting to personalize its outputs based on your prompts and replies. It also can’t grasp the possible real-world consequences or be held accountable if something goes wrong after you use its suggestions.

When ChatGPT’s Advice Can Be Helpful

Yes, ChatGPT may provide helpful guidance at times. For example, in low-risk situations where you simply need a sounding board, AI may be a suitable option.

You might use ChatGPT to:

- Brainstorm ideas

- Create a pros and cons list

- Think about problems from a new angle

- Make a list of what you should ask before making a big decision

- Write a list of prompts to use if you’re stuck when journaling

- Draft a neutral email to your boss

- Break a big project into more manageable chunks

The truth is, AI can be useful in many situations, especially when you use it as a starting point and not the final word on your next steps. However, advice from ChatGPT should be taken with a grain of salt.

Where ChatGPT’s Life Advice Falls Short

One reason it’s challenging to obtain appropriate or thoughtful guidance from AI is that it often has hidden biases. ChatGPT comes across as overly confident, seemingly wise, and caring, but it overlooks critical concerns that a human would notice. It can offer you generic responses that sound like reliable, expert advice, but there’s a gap between AI’s confidence and how reliable it actually is. This is why it’s so risky to ask ChatGPT for advice on important or high-stakes issues.

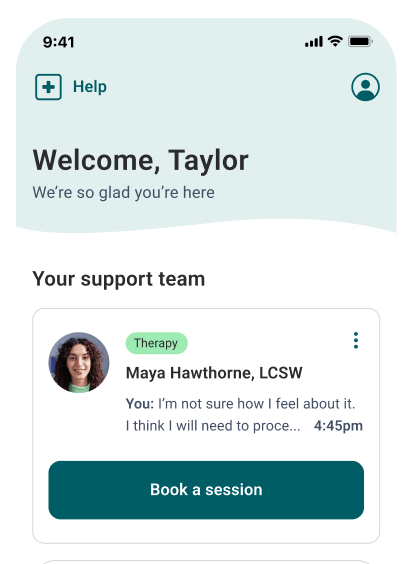

Licensed Therapists Online

Need convenient mental health support? Talkspace will match you with a licensed therapist within days.

Of the countless decisions you’ll make in life, many will have big consequences. These are the types of decisions that rely on more than facts. They require emotional intelligence, experience, context, and accountability from an empathetic party, all things that AI just can’t provide.

Emotional validation vs. real emotional support

ChatGPT can be comforting in stressful moments by using phrases like “Your feelings are valid” or “Wow, it really makes sense that you feel this way.” Its responses can be so validating that it becomes easier to open up, because you trust you won’t get confusion, judgment, or dismissal in return, as you might from a friend, family member, partner, or even a human therapist.

There’s an important difference between the simulated empathy of AI and the authenticity a human can offer, though. You can’t build a genuine emotional connection with a computer. While artificial intelligence bots like ChatGPT may be able to mirror active listening and understanding, relying on a chatbot for emotional support might actually increase feelings of isolation, according to some research.

Emotional safety doesn’t come from hearing kind words that merely sound like someone cares. It comes from someone being present and engaged, and from feeling seen and heard.

Moral, ethical, and values-based limitations

Anyone who’s struggled with a moral dilemma knows how hard it is when you don’t know what the “right thing” to do is. Perhaps you need to communicate a painful truth, admit you’re wrong, navigate loyalty issues, or set boundaries that are confusing and challenging. While you may be tempted to ask for advice, research indicates that ChatGPT is prone to strong bias when making moral decisions.

Cultural norms influence the data AI uses, so its answers may not always accurately reflect the complexity of a situation. If you’re hoping ChatGPT can act as your moral compass, it can’t. It’s going to give you the answer it thinks you want, not the one that reflects your values or priorities.

Life Advice vs. Mental Health Support

Asking ChatGPT for general advice isn’t as risky as seeking mental healthcare from it. You may have wondered, “Is AI therapy safe?” before trying it out yourself. While AI can be a great resource for learning basic skills, organizing your day, or creating a study plan, it’s not the best option if you need to deal with depression or anxiety or are recovering from trauma, self-harm, low self-esteem, or abuse.

“There is nothing wrong with using AI as a tool to aid in mental health symptoms, but relying on them for emotional support or life advice is harmful. AI should be considered a very fancy search engine. It accumulates the information you’re seeking and presents it to you in nice words. In this view, it misses the nuances and particular empathy needed. Relying too much on AI or chatbots runs the risk of losing real world perspective and social nuances to navigate the space we live in.” - Talkspace therapist Minkyung Chung, MS, LMHC

There’s a clear difference between relying on ChatGPT to help you manage a stressful week and confiding something like, “I don’t know how much more I can take.” Experts warn against using chatbots for emotional support and are clear in their stance that AI isn’t a suitable substitute for crisis support or professional mental healthcare.

According to studies, some groups are more vulnerable when asking ChatGPT for advice. For example, if you have depression or anxiety, you might be more susceptible to self-harm or suicidal ideation. Since AI can grossly underestimate the severity of your situation, its responses might delay diagnosis or treatment. It may offer misinformation or inappropriate encouragement, or validate delusional, harmful thought patterns and negative behaviors. In fact, several lawsuits have recently been filed by families whose loved ones took their own lives after interacting with AI-powered chatbots.

Signs It’s Time to Seek Human Support

If you’re concerned that ChatGPT’s life advice isn’t helpful or you’ve wondered if you should seek further support, pay attention to the following red flags:

- Increased anxiety

- Persistent sadness

- Emptiness that doesn’t go away

- Repeatedly asking about the same topics without increased ability to cope

- Thoughts of self-harm

- Feeling hopeless

- Feeling like you “don’t want to be here” anymore

- Confusion or feeling stuck

- Feeling emotionally overwhelmed

Though ChatGPT can be a helpful tool, you might need more than what AI can offer. Turning to a therapist, friend, or family member might be exactly what you need to take a proactive step. You shouldn’t view this as a failure.

“In the beginning, chatbots are incredibly helpful in organizing and timing the coping skills they find for the person. However, it starts to become difficult when the coping skills aren’t working or the organizational skills are adjusting to the experiences the person is having. The signs and behaviors to look out for are feeling increasingly frustrated over what options are being offered by the chatbot or outright ignoring the suggestions as it makes the person uncomfortable or is not feasible within the lifestyle. It is important to recognize that asking for help beyond chatbots to a licensed professional would allow a more tailored approach with feedback that can help the person grow.” - Talkspace therapist Minkyung Chung, MS, LMHC

Final Thoughts: Should You Trust ChatGPT for Life Advice?

ChatGPT can be an excellent resource in many situations. It should not, however, be your source for meaningful life guidance. AI is part of our reality today, and it can be beneficial, as long as it’s used with caution and self-awareness.

If you need mental health support or you are facing a major life decision, there’s no substitute for an experienced, licensed, qualified mental health professional. Make the switch from leaning on AI therapy to confiding in a provider who will take the time to get to know you and steer your mental health journey in the right direction.

A note about AI: On the Talkspace blog we aim to provide trustworthy coverage of all the mental health topics people might be curious about, by delivering science-backed, clinician-reviewed information. Our articles on artificial intelligence (AI) and how this emerging technology may intersect with mental health and healthcare are designed to educate and add insights to this cultural conversation. We believe that therapy, at its core, is focused around the therapeutic connection between human therapists and our members. At Talkspace we only use ethical and responsible AI tools that are developed in partnership with our human clinicians. These tools aren’t designed to replace qualified therapists, but to enhance their ability to keep delivering high-quality care. To learn more, visit our AI-supported therapy page.

Sources:

- Kalam KT, Rahman JM, Islam MdR, Dewan SMR. ChatGPT and mental health: Friends or foes? Health Science Reports. 2024;7(2):e1912. doi:10.1002/hsr2.1912. https://pmc.ncbi.nlm.nih.gov/articles/PMC10867692/. Accessed January 10, 2026.

- Cheung V, Maier M, Lieder F. Large language models show amplified cognitive biases in moral decision-making. Proceedings of the National Academy of Sciences. 2025;122(25):e2412015122. doi:10.1073/pnas.2412015122. https://pmc.ncbi.nlm.nih.gov/articles/PMC12207438/. Accessed January 10, 2026.

- Gardner S. Experts caution against using AI chatbots for emotional support. Teachers College - Columbia University. Published December 3, 2025. https://www.tc.columbia.edu/articles/2025/december/experts-caution-against-using-ai-chatbots-for-emotional-support/. Accessed January 10, 2026.

- Social Media Victims Law Center PLLC. SMVLC files 7 lawsuits accusing Chat GPT of emotional manipulation, acting as “Suicide coach” - Social Media Victims Law Center. Social Media Victims Law Center. Published November 6, 2025. https://socialmediavictims.org/press-releases/smvlc-tech-justice-law-project-lawsuits-accuse-chatgpt-of-emotional-manipulation-supercharging-ai-delusions-and-acting-as-a-suicide-coach/. Accessed January 10, 2026.

Talkspace articles are written by experienced mental health-wellness contributors; they are grounded in scientific research and evidence-based practices. Articles are extensively reviewed by our team of clinical experts (therapists and psychiatrists of various specialties) to ensure content is accurate and on par with current industry standards.

Our goal at Talkspace is to provide the most up-to-date, valuable, and objective information on mental health-related topics in order to help readers make informed decisions. Articles contain trusted third-party sources that are either directly linked to in the text or listed at the bottom to take readers directly to the source.