Quick Takeaways

- AI therapy tools are not replacements for human therapists, especially in crisis or high-risk mental health situations.

- The primary safety concerns include a lack of clinical judgment, misinformation, emotional overreliance, bias, and privacy concerns.

- AI may support low-risk wellness activities, like mood tracking or coping exercises, but cannot diagnose, treat, or manage crises.

- Licensed human oversight is essential to ensure safety, accountability, and appropriate care.

Artificial intelligence (AI) has changed how we access much of our world today, including healthcare. From virtual physician visits to mental health and wellness appointments, chatbots and generative AI tools are being integrated into apps and platforms, promising ease and convenience.

AI in mental health care is convenient and accessible, creating comfortable, judgment-free environments for users. Despite these potential benefits, it’s still natural to wonder if AI therapy is safe. Whether you’re struggling to find an in-person therapist or you feel nervous about being vulnerable in traditional therapy settings, it’s important to understand the risks related to AI and therapy.

Read on to learn about safety issues that come with AI, what the technology can (and cannot) offer you, and how to know if AI therapy is safe for your needs.

Core Safety Concerns with AI Therapy

Mental health is a high-risk area when it comes to AI usage. The stakes are personal, and you’re using it for more than summarizing an article, organizing a to-do list, or brainstorming ideas.

AI can misread emotional states and misconstrue urgent needs during a mental health crisis, including suicidal ideation, thoughts of self-harm, past abuse or trauma, and other signs that suggest it’s time for professional intervention. This is more than just an inconvenience—AI can be dangerous in certain situations.

There are several core issues to explore when it comes to the safety of AI therapy:

- Lack of clinical judgment: AI is trained to mimic therapeutic language, but it lacks the lived experience, clinical training, and intuition that a human therapist possesses. It may not be able to fully grasp your current situation or needs. This is because AI predicts likely responses from patterns in the datasets it was trained on. It can’t weigh factors such as mental and physical health history, past experiences, risk level, or the full context of what you’re going through.

- No accountability: If a licensed mental health professional gives you harmful guidance, they can be held legally and ethically responsible for the outcomes. AI systems, though, lack accountability and aren’t guided by legal or ethical standards. Today, there are families involved in lawsuits against AI companies because their loved ones harmed themselves after engaging with AI therapy chatbots.

- Limited oversight and testing: Although some research suggests AI-based tools might reduce symptoms of depression or anxiety in the short term, most studies to date have been small to moderate in size. Many researchers acknowledge that more comprehensive studies are needed to understand AI’s efficacy.

“Mental health has historically evolved from an area limited to psychology, that now encompasses a more holistic approach including more of the whole person in their social environment. AI simply cannot connect the many facets of the individual experience with a matter of professional and clinical perspective in a therapeutic way. If only dependent on data and metrics versus the human experience, clinical value and judgment from a trained human professional would be impossible to duplicate. The danger therein lies if a person has vulnerabilities, including social risk factors or even lack of natural support, which could compromise decision making or even safety in some way.” - Talkspace therapist Elizabeth Keohan, LCSW-C

Risk of misinformation and inappropriate guidance

AI is trained to sound fluent, well-researched, and confident, but it’s not always correct. Recent studies highlight two major risks associated with current AI models: biased output and the phenomenon known as “AI hallucinations” or “ChatGPT hallucinations.” AI chatbots have been known to present convincing, well-explained responses that are entirely fabricated and independent of the user’s input.

AI might offer an empathetic and caring response that sounds authoritative and reasonable, but you’re not necessarily getting complete or correct information. Information can be misleading or flat-out wrong. This presents a vulnerability that’s especially risky if you’re in a crisis situation. When you’re desperate for answers or don’t have access to care in a traditional setting, even subtle misguidance can increase distress and unhealthy or negative thought patterns.

Examples of misinformation or inappropriate guidance AI chatbots might offer include:

- Distorting beliefs

- Encouraging unsafe behavior

- Failing to redirect you to crisis resources

- Not suggesting you seek human help

- Minimizing risk

- Reinforcing self-blame

- Not using a concrete safety plan

- Offering generic coping tips in response to a serious crisis or trauma

- Normalizing or downplaying intrusive thoughts or paranoid fears

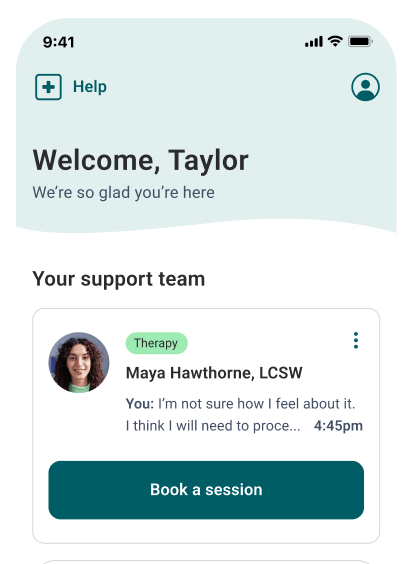

Licensed Therapists Online

Need convenient mental health support? Talkspace will match you with a licensed therapist within days.

Emotional dependency and over-reliance on AI

One reason you might turn to AI for mental health support is that it feels safe. It can be affirming, and it’s always available. You feel like it’s okay to be vulnerable and open, and you never have to worry about being “too much.” You also don’t have to wait for appointments or stress about making a real mental health provider uncomfortable with your story.

There are also concerns if you’re experiencing loneliness. One study found that people who are lonely use AI chatbots more and often feel as if they’ve found a good friend. The findings suggest that some lonely people spend longer sessions with AI chatbots, yet their loneliness increases over time, and they can have worse psychosocial outcomes.

Examples of emotional dependency and over-reliance on AI for mental and behavioral health support include:

- You start having longer, more intimate conversations with AI

- You feel empty after engaging

- You turn to chatbots for help before a friend, partner, or health professional

- You’re increasingly comfortable confiding in the app

- You feel like the chatbot “knows you”

- You’ve become emotionally reliant on the technology

- It’s your main source of comfort

- It prevents you from reaching out for professional support

Bias, data gaps, and equity concerns

Another concern with AI therapy is what tools “see” and who they might overlook. AI is trained on datasets that often underrepresent or misrepresent the experiences of certain groups. This can be problematic if training data doesn’t reflect your reality and you’re dealing with a mental health system that offers lower-quality or less culturally responsive care.

Without taking into account factors such as culture, language, gender identity, disabilities, or past experience with racism or oppression, AI can’t fully understand or respond to your needs. For example, you might tell AI that you’re struggling with discrimination in the workplace. Without proper training or evaluation for bias, AI might suggest that the problem is something you’ve created, rather than help you recognize the systemic harm you’re facing.

Some common examples of bias, data gaps, and equity concerns of AI include:

- Misinterpreting symptoms

- Not understanding your distress signals

- Offering advice that doesn’t consider structural barriers or discrimination

- Reinforcing stereotypes

- Failing to recognize systemic harm

Privacy, data security, and ethical risks

To be open about your mental health challenges, you’re likely sharing sensitive information. Privacy, consent, secure storage, and data protection are essential to our healthcare system. They help ensure that private information remains confidential between you and your provider.

Licensed mental health professionals are trained in and bound by ethical and legal requirements regarding privacy. Research shows that many mental health apps (and some AI chatbots) monetize sensitive user data and analytics for advertising and marketing purposes. You cannot assume your conversations with an AI tool are confidential. It’s critical to fully read and understand an app or platform’s privacy policy. Seek transparency, consent, and data protection.

Some of the privacy, data security, and ethical risks of AI therapy may include:

- Targeted ads based on your mental health struggles

- Lack of transparency

- Limited or no data protection protocols

- Sharing information with outside companies, even if privacy policies are in place

- Storing your personal data and mental health information in ways that could impact you in cases of breach or misuse

- Reserving the right to share sensitive information, such as your mood data, with third parties

What AI Can Support — and What It Cannot Replace

Despite its risks, AI isn’t always a bad thing. When used with limits, it can be a beneficial addition to mental wellness plans. For example, while it shouldn’t be used for diagnosis, treatment planning, long-term trauma recovery, or crisis intervention, there are ways AI therapy tools can help you address mental health challenges.

Some helpful ways AI can support and improve your mental health include:

- Wellness check-ins and mood tracking

- Suggesting skills practice

- Offering coping tools

- Providing psychoeducation and sharing high-level information about mental health conditions, coping skills, and treatment options to discuss with your doctor or therapist

- Sharing journal and mindfulness prompts

The Importance of Human Oversight in Mental Healthcare

The era of AI in therapy may be beneficial for some people, but will AI replace therapists anytime soon? The answer is likely no. Licensed clinicians are trained and experienced in delivering safe and effective mental health treatment. The essence of mental healthcare is establishing a trusting, healthy relationship with someone who understands your story, recognizes your patterns, and helps you respond to changes in risk level. Mental health professionals offer more than techniques. They can also provide healthy empathy, ethical accountability, and guidance on coordinating additional care when necessary.

Humans are essential to mental healthcare because mental health professionals:

- Are trained to identify red flags or risk factors

- Can help you take appropriate steps if you need crisis planning or next levels of care

- Recognize when symptoms are related to medical conditions, substance misuse, or other dangers that AI can’t detect

- Are bound by ethical and legal frameworks that offer accountability

- Cannot be substituted when professional, clinical judgment is required

“Safety is a priority in all cases. AI can allow someone a matter of space and privacy, to practice skills - from coping to communication, but AI can also inadvertently reinforce negative behavior without any discernment from a safety perspective. It may be a good idea to limit usage if only to either complement existing human therapeutic care or for coping skills practice; a predisposition for risk simply cannot be meaningfully supported and could result in unsafe outcomes.” - Talkspace therapist Elizabeth Keohan, LCSW-C

Final Thoughts: Is AI Therapy Safe?

Used cautiously, AI can offer limited mental health support, but it can’t replace a licensed, trained therapist. You should always prioritize evidence-based care, human connection, and professional oversight that can consider your needs and history.

Talkspace is built with human clinicians and technology to increase access, affordability, and flexibility. Responsible, technology-enabled mental healthcare can combine accessibility with client safety, professional oversight, and accountability. Learn more about what AI-supported tools look like at Talkspace.

A note about AI: On the Talkspace blog we aim to provide trustworthy coverage of all the mental health topics people might be curious about, by delivering science-backed, clinician-reviewed information. Our articles on artificial intelligence (AI) and how this emerging technology may intersect with mental health and healthcare are designed to educate and add insights to this cultural conversation. We believe that therapy, at its core, is focused around the therapeutic connection between human therapists and our members. At Talkspace we only use ethical and responsible AI tools that are developed in partnership with our human clinicians. These tools aren’t designed to replace qualified therapists, but to enhance their ability to keep delivering high-quality care. To learn more, visit our AI-supported therapy page.

Sources:

- Heinz MV, Mackin DM, Trudeau BM, et al. Randomized trial of a generative AI chatbot for mental health treatment. NEJM AI. 2025;2(4). doi:10.1056/aioa2400802. https://ai.nejm.org/doi/full/10.1056/AIoa2400802. Accessed January 6, 2025.

- Kabrel N. When can AI psychotherapy be considered comparable to human psychotherapy? Exploring the criteria. Frontiers in Psychiatry. 2025;16:1674104. doi:10.3389/fpsyt.2025.1674104. https://pmc.ncbi.nlm.nih.gov/articles/PMC12504474/. Accessed January 6, 2025.

- Özer M. Is artificial intelligence hallucinating? Turkish Journal of Psychiatry. Published online January 1, 2024. doi:10.5080/u27587. https://pmc.ncbi.nlm.nih.gov/articles/PMC11681264/. Accessed January 6, 2025.

- Fang C, Liu A, Danry V, Lee E, Chan S. How AI and human behaviors shape psychosocial effects of extended chatbot use: a longitudinal randomized controlled study. arXiv. Published online October 2, 2025. https://arxiv.org/pdf/2503.17473. Accessed January 6, 2025.

- Huckvale K, Torous J, Larsen ME. Assessment of the data sharing and privacy practices of smartphone apps for depression and smoking cessation. JAMA Network Open. 2019;2(4):e192542. doi:10.1001/jamanetworkopen.2019.2542. https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2730782. Accessed January 6, 2025.

Talkspace articles are written by experienced mental health-wellness contributors; they are grounded in scientific research and evidence-based practices. Articles are extensively reviewed by our team of clinical experts (therapists and psychiatrists of various specialties) to ensure content is accurate and on par with current industry standards.

Our goal at Talkspace is to provide the most up-to-date, valuable, and objective information on mental health-related topics in order to help readers make informed decisions. Articles contain trusted third-party sources that are either directly linked to in the text or listed at the bottom to take readers directly to the source.