Key Takeaways

- AI has the potential to improve access and efficiency in mental health care, but it cannot replace human empathy or clinical judgment.

- Used responsibly, AI helps reduce administrative burden, identify patterns, and scale support while licensed providers remain accountable for treatment decisions.

- Protecting privacy, preventing bias, ensuring transparency, and maintaining human oversight allow AI to strengthen mental health care without eroding safety or trust.

Can a machine understand your deepest fears? Millions are betting yes. Mental health apps powered by AI are everywhere now, offering instant therapy and crisis support at hours when no human therapist is available.

AI for health and wellbeing promises to change psychological care in meaningful ways, making help easier to access. But there are many things it can't do: feel what you're feeling, pick up on what you're not saying, or bring the human judgment that makes therapy work. AI augments human care but doesn't replace it.

AI holds some promise for detecting mental health challenges early, expanding access to support, and reaching populations who might never seek traditional therapy. The risk is that companies will fast-track products with safety and ethical shortcomings. Anyone exploring mental health-focused AI tools should understand both what they can deliver and where they're likely to disappoint.

What is Artificial Intelligence in Mental Health?

Artificial intelligence (AI) refers to computer systems that learn from data to recognize patterns and make predictions. In mental health care, it analyzes large volumes of psychological and behavioral data to support clinical decision-making and care delivery.

Most mental health AI tools rely on two core technologies:

- Machine learning (ML): ML identifies patterns across large datasets and improves over time. In mental health settings, this can include analyzing symptom histories or behavioral trends to help predict risks such as symptom worsening or potential mental health crises.

- Natural language processing (NLP): NLP enables computers to analyze written or spoken language. In mental health care, this can include examining speech or text patterns for changes linked to mood, stress, or emotional regulation, and summarizing therapy notes for clinical use.

When used responsibly, these technologies can help surface insights from data, such as language patterns or self-reported symptoms. However, they cannot understand personal context, intent, or emotional nuance the way a human therapist can, which is why clinical oversight remains essential.

Current Applications: How AI Supports Mental Health Care

AI often operates in the background, supporting clinicians and systems rather than functioning as a stand-alone solution.

Here’s where it has the most impact today:

Predictive analytics and early detection

AI can spot patterns across large datasets that humans can’t easily review at scale. By analyzing electronic health records (EHRs) and behavioral data, machine learning tools can flag early warning signs of depression, anxiety, and other mental health concerns.

This early detection allows clinicians to intervene sooner, potentially preventing crises before they escalate. In hospital settings, these systems are already helping identify patients who might benefit from immediate mental health support.

AI for accessibility: Conversational agents and chatbots

AI-powered conversational tools are often the most visible application of mental health technology. These tools, sometimes described as artificial intelligence therapy, are typically designed for accessibility and support, rather than for diagnosis or treatment.

Chatbots and conversational agents can help with:

- Initial triage and symptom check-ins

- Psychoeducation and coping skill reminders

- Basic mood tracking and self-reflection prompts

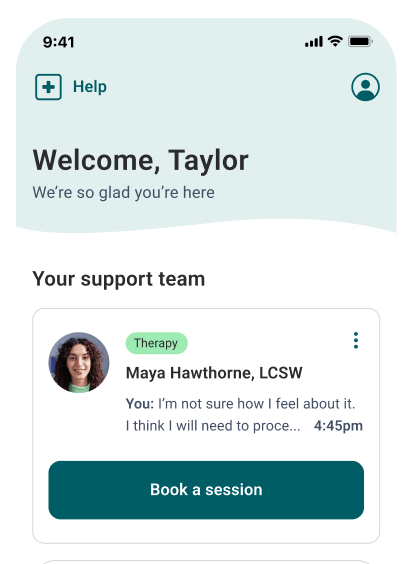

Some mental health care providers, such as Talkspace, use AI-driven tools to support different parts of the care process, including therapist notetaking and between-session engagement.

Administrative efficiency and clinical documentation

AI can also help reduce the administrative burden that contributes to clinician burnout. Tasks such as documentation, scheduling, and billing take significant time away from direct care.

AI-assisted tools can:

- Summarize sessions and draft clinical notes

- Automate appointment reminders and scheduling workflows

- Help streamline billing and insurance processes

By handling repetitive, time-intensive tasks, AI allows clinicians to spend more time on care and less time on paperwork.

Personalized intervention recommendations

Machine learning can support more personalized care across large populations. By analyzing patterns in de-identified data (information stripped of personal details such as names or contact information), AI therapy systems can suggest treatment approaches that have been effective for people with similar symptoms or care histories.

These insights can help clinicians:

- Identify therapy approaches that tend to work well for certain symptom patterns

- Notice when engagement trends suggest a care plan may need adjustment

- Bring data-informed context into clinical decision-making

Clinicians use these recommendations as part of their broader decision-making, informed by their expertise and their understanding of the person they are supporting. Together, these applications show how AI can enhance mental health care by supporting systems and the people who rely on them.

Benefits for Patients, Clinicians, and Systems

When used thoughtfully, AI can strengthen mental health care across the care experience. It helps reduce strain on systems while making it easier for people to consistently access support.

Within the broader conversation about AI for health and wellbeing, mental health care stands out as an area where human oversight remains essential.

Addressing access barriers

Access is a major challenge in mental health care, with many people facing long wait times or limited provider availability. AI helps virtual therapy platforms reach more people by enabling tools such as automated intake, symptom tracking, and care matching.

These systems can connect individuals to available providers regardless of location and help manage high demand without overwhelming clinical teams. In clinical settings, AI is primarily used to help people access appropriate support more quickly and efficiently.

Objective data collection to reduce human bias

Human judgment is essential in mental health care, but it is not immune to bias or inconsistency. AI systems can help by collecting and analyzing data more consistently.

By examining patterns across large datasets, AI can surface trends that might otherwise be missed during intake or early triage. For example, structured symptom reporting or language analysis can help identify potential risk signals consistently across populations.

When combined with clinical oversight, this objective data can support fairer, more informed decision-making without replacing professional judgment.

Reducing clinician burnout through administrative relief

Burnout among mental health providers is closely tied to administrative overload. Documentation, scheduling, and billing demands often compete with time spent on care.

AI-assisted tools can ease this burden by automating routine tasks such as drafting notes and managing appointments. When clinicians spend less time on paperwork, they can devote more attention to their members and their own well-being.

Risks, Limitations, and Ethical Red Flags

While AI offers meaningful support in mental health care, its limitations matter just as much as its potential. Understanding where AI falls short is essential for using it responsibly.

Limits of empathy and understanding

AI can recognize patterns in language and behavior, but it does not grasp lived human experience. It cannot feel empathy or respond intuitively to emotional nuance. These qualities form the foundation of the therapeutic alliance.

According to a 2018 meta-analysis, "Therapist empathy and client outcome: An updated meta-analysis," empathy is a moderately strong predictor of therapy outcome.

Human therapists bring an understanding of context and relationships that AI cannot replicate. This becomes especially important during vulnerable or emotionally complex moments, when how something is said and when it’s said can matter as much as the words themselves.

"Artificial intelligence can provide general advice, direction, or guidance based on the 'facts' that it is given. However, oftentimes when people come to therapy, they do so hoping to be able to connect with someone who has either been through a situation similar to what they are experiencing, or someone who has experience helping people in that situation or with that diagnosis. Artificial intelligence does not have the real-world experience that people are often looking for."

- Talkspace Therapist, Henry Jay Swedlaw, LPC, LMHC

Data privacy, security, and confidentiality concerns (HIPAA implications)

Mental health data is deeply personal, and using AI adds new privacy and security considerations. These systems handle sensitive information; therefore, strong safeguards for data collection, storage, and sharing are essential.

In clinical settings, tools must comply with laws such as HIPAA to reduce the risk of misuse or breaches. Transparency about data practices and clear, informed consent are key to maintaining trust.

Algorithmic bias and health disparities

AI systems are only as good as the data they are trained on. When training data reflects existing social, racial, gender, or economic biases, those biases can be reinforced rather than corrected.

In mental health care, this can mean tools that work less accurately for groups who are underrepresented in their training data. Without careful evaluation and monitoring, AI could widen existing disparities in care rather than narrow them.

Responsibility and liability in crisis intervention

If an AI system fails to detect signs of severe risk, the consequences can be serious. AI tools can support awareness, but they can’t make safety decisions or take responsibility for outcomes.

Accountability rests with the organizations and clinicians using these tools, which is why clear protocols for review and human intervention are essential. Responsible use means recognizing AI’s limits and ensuring human judgment remains central.

Regulations and Standards You Need to Know

As AI becomes more common in mental health care, regulation and professional standards play a critical role in protecting the quality of care. Not all AI tools are subject to the same regulations, and understanding these distinctions is key to ensuring their responsible use in clinical settings.

FDA oversight for medical devices

In the U.S., the Food and Drug Administration (FDA) regulates AI tools that meet the definition of a medical device. This typically includes technologies that are used to diagnose conditions, guide treatment decisions, or make clinical claims that could affect health outcomes.

Many mental health AI tools do not fall into this category. Apps focused on wellness, education, mood tracking, or self-reflection usually do not require FDA clearance because they are not intended to diagnose or treat mental health conditions.

In contrast, AI tools that claim to detect a mental health condition, predict clinical risk, or directly inform treatment decisions may require FDA review and clearance or approval. This distinction matters. FDA oversight helps ensure that higher-risk tools meet safety, effectiveness, and transparency standards, while lower-risk wellness tools are held to different expectations.

Clinical guidelines and professional ethics (APA and AMA)

Beyond regulatory oversight, professional ethics guide how AI should be introduced into care. Organizations such as the American Psychological Association and the American Medical Association have emphasized that technology must support ethical clinical practice.

Key ethical principles include:

- Informed consent, so individuals understand how AI tools are used and what their limits are

- Transparency around data use, storage, and privacy

- Clear clinical responsibility, with humans accountable for decisions and outcomes

- Ongoing evaluation to ensure tools do not introduce harm or bias

For therapists and psychiatry providers, ethical practice means carefully considering whether an AI tool enhances care, protects member wellbeing, and aligns with professional standards. Regulation and ethics together provide guardrails that help ensure AI strengthens mental health care rather than compromising it.

"When utilizing artificial intelligence in therapy, it is important for both provider and client to understand the limitations of AI. The therapist should explain to the client the specific limitations that AI has, any risks/benefits, alternatives, as well as limits to confidentiality that would still be in place even when utilizing artificial intelligence. The therapist should also be able to explain data encryption basics if asked."

- Talkspace Therapist, Henry Jay Swedlaw, LPC, LMHC

How to Evaluate and Choose an AI Tool

Not all AI tools are developed with the same level of oversight or ethical care. Thoughtful evaluation helps protect the quality of care and maintain trust when these tools are used.

Transparency and explainability (The "black box" problem)

One of the biggest concerns with AI is explainability. Some systems generate outputs without clearly showing how they arrived at those conclusions, a problem often referred to as the "black box" problem.

When evaluating an AI tool, it should be possible to understand:

- What data the system uses

- What the tool is designed to do and what it is not designed to do

- How its outputs are meant to support human decision-making

When AI systems lack transparency, it becomes harder for clinicians to understand how outputs were generated or to spot potential errors. In mental health care, trust depends on understanding how a tool produces its results.

Evidence base and validation (Does it have clinical trials?)

Claims about AI tools should be backed by strong evidence. Responsible tools are grounded in research and, when appropriate, evaluated through clinical studies or real-world validation.

Key questions to ask:

- Does the evidence support the specific claims being made?

- Does it reflect diverse populations?

- Do tools that influence care decisions meet higher standards than general wellness apps?

Data security protocols and HIPAA compliance

Mental health data requires strong protections. Any AI tool used in care settings should clearly outline:

- How it handles data storage

- Encryption methods

- Access controls

- Data retention policies

HIPAA compliance is essential for tools that handle protected health information. Even beyond legal requirements, best practices include minimizing data collection, limiting access to sensitive information, and being transparent with members about how their data is used.

Ethical considerations in implementation

Choosing an AI tool is both a technical and ethical decision. Clinicians and organizations should consider how the tool affects autonomy, consent, and responsibility.

Important questions include:

- Are members clearly informed that AI is being used and why?

- Does the tool support, rather than replace, clinical judgment?

- Who is accountable if the tool makes an error or misses a risk?

Ethical implementation means AI supports care without taking control of decisions. When evaluation focuses on transparency, evidence, security, and ethics together, AI is far more likely to enhance mental health care without compromising the human values at its core.


Future Outlook: Where AI and Mental Wellbeing are Heading

The future of AI in mental health care centers on collaboration, with technology supporting clinicians rather than replacing them. AI can help with tasks like symptom monitoring, progress tracking, and flagging when someone may need extra support. This allows therapists to respond more efficiently while keeping professional judgment and human connection at the core.

Accessing AI-Enhanced Therapy Online

For many people seeking mental health support, the question isn't whether AI should be part of therapy, but how it can be used responsibly. When thoughtfully integrated, AI can remove barriers to access and support the therapeutic relationship rather than replace it.

Some online therapy services are using AI to support care in practical ways, pairing advanced technology with licensed therapists who remain responsible for treatment. AI is integrated into parts of the care experience to improve efficiency, access, and continuity, while human providers guide clinical decisions.

AI can be used to support behind-the-scenes functions such as therapist matching, scheduling, and documentation, enabling care teams to respond more efficiently and giving therapists more time for clinical work. For Talkspace members, AI-powered tools can support engagement between sessions and make mental health information easier to access. Experience the balance of human care and smart technology. Start your Talkspace journey today.

Frequently Asked Questions

Can AI diagnose mental health conditions?

No, AI tools cannot diagnose mental health conditions. Diagnosis requires clinical training, contextual understanding, and professional judgment, and it can only be made by a licensed therapist or psychiatric provider. Some AI tools may help organize information or flag patterns, but they cannot make or confirm diagnoses.

Is AI therapy as effective as working with a human therapist?

AI is not a replacement for therapy with a human provider. While AI therapy tools can support learning, mood tracking, or self-reflection, they do not offer the empathy, responsiveness, or therapeutic relationship that drives meaningful change.

Can AI accurately detect suicide risk or mental health crises?

AI can sometimes identify patterns that may suggest elevated risk, such as changes in language or engagement. However, it is not reliable enough to manage crisis detection on its own. False positives and missed signals are both possible; therefore, AI must be paired with clear protocols and human oversight. Responsibility for safety decisions always rests with trained professionals.

What are the biggest ethical and privacy risks of using AI in mental health care?

The most significant concerns about AI in mental health care include data privacy, informed consent, and misuse of sensitive information. Mental health data is deeply personal, and any AI tool handling it must follow strict security standards and clearly explain how data is collected and used. There are also ethical risks related to bias, transparency, and accountability if AI systems are not carefully evaluated and monitored.

Will AI ever replace therapists or other mental health professionals?

There is no indication that AI will replace therapists. The most realistic and responsible future is likely a hybrid model, where AI supports clinicians by improving efficiency and access while humans provide care, judgment, and connection. At its core, mental health treatment depends on trust, empathy, and ethical responsibility. These are strengths only humans can provide.

Sources

- Elliott R, Bohart AC, Watson JC, Murphy D. Therapist empathy and client outcome: An updated meta-analysis. Psychotherapy. 2018 Dec;55(4):399-410. https://doi.org/10.1037/pst0000175

Talkspace articles are written by experienced mental health-wellness contributors; they are grounded in scientific research and evidence-based practices. Articles are extensively reviewed by our team of clinical experts (therapists and psychiatrists of various specialties) to ensure content is accurate and on par with current industry standards.

Our goal at Talkspace is to provide the most up-to-date, valuable, and objective information on mental health-related topics in order to help readers make informed decisions.

Articles contain trusted third-party sources that are either directly linked to in the text or listed at the bottom to take readers directly to the source.