This post, Donate Your Health Care Data Today, was originally published as an opinion piece in The New York Times’ ‘The Privacy Project’ on October 2nd, 2019.

If you’re reading this, you’ve probably become increasingly concerned about your data, and for good reason: It seems that every day, we wake up to news about a new data breach or privacy violation, encouraging collective paranoia to travel widely and well.

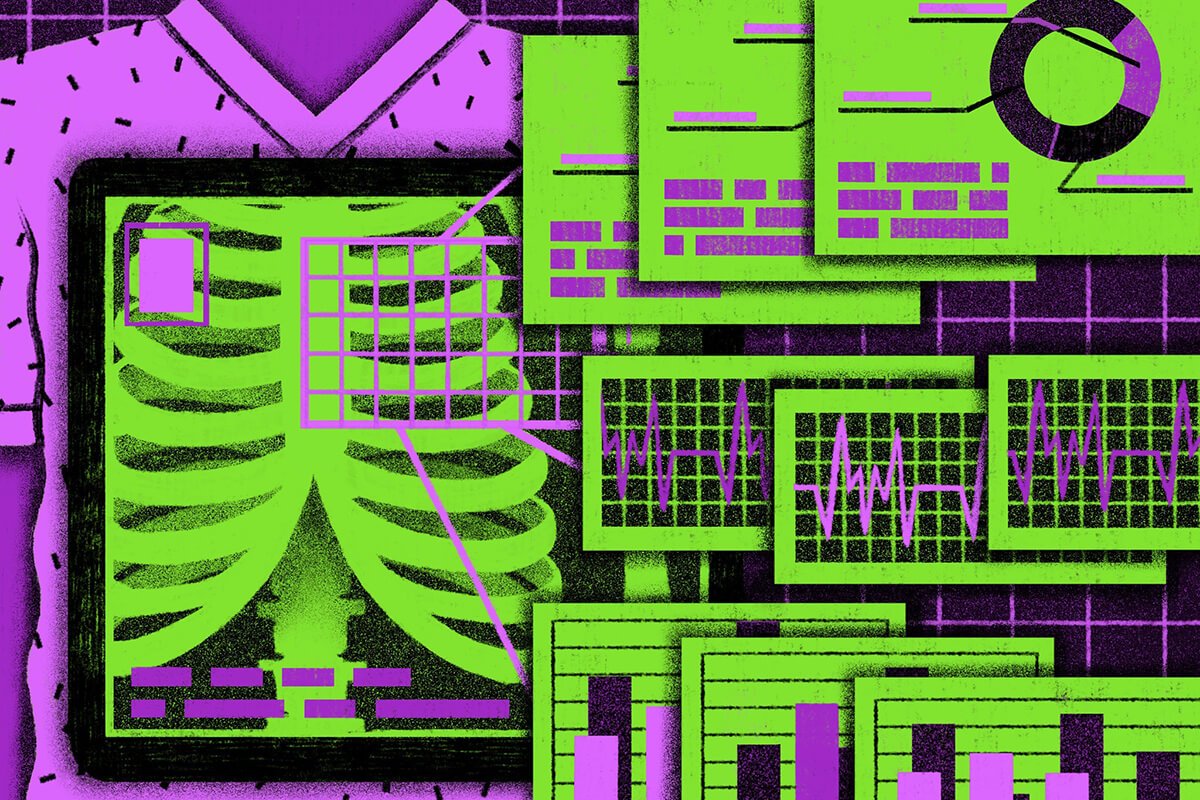

This fear is perhaps most justified when it comes to matters as intimate as our health — there is something haunting about the image of an attacker with unauthorized access to our treatment records, medication protocol and comprehensive electronic health records. On the other hand, should we really be so worried that people will find out about our history of arrhythmia or the results of a recent blood test? In reality, it is not the existence of this data that’s dangerous but the intent of the agents who can obtain it and what they choose to use it for.

But I think it’s time to stop and consider how we might reframe and rethink our cultural narrative around privacy, particularly the critical role health care data could play in medical innovation. Aggregated health care data has the potential to be a public good, part of a collective effort to develop new medical treatments, improve clinical outcomes across medical fields and save lives.

Our current “health care data” includes wide profiling

A lot has changed, technologically speaking, since 1996, when the Health Insurance Portability and Accountability Act (HIPAA) became law, and even since 2009, when Congress passed the Health Information Technology for Economic and Clinical Health Act, which aimed to incentivize providers and patients to adopt the use of technology and electronic medical records. Thanks to improvements in data storage and computational technologies, medical advancements no longer rely simply on individual human learning processes: testing hypotheses in real time, tracking outcomes of limited data sets, developing theories based on patterns over time.

With enormous amounts of patient health data being collected and digitized each day, the other piece of the puzzle comes into focus. If aggregated, our anonymized health records could become part of a large-scale data set to improve the diagnosis and treatment of illnesses across all medical fields using machine learning algorithms. The more anonymous data we collect, both demographic and medical, the better we can identify causes, diagnose early and develop better treatments. In the process, we can draw connections between previously disconnected data sets: diagnoses and geography, medication protocol and lifestyle, treatment success and medical history, and plenty more.

To do this successfully and at scale, we need data. All of our data. Mine and yours.

Machine learning was recently shown to detect early lung cancer more accurately than human radiologists. In May 2019, Google and Northwestern Medicine teamed up to apply a deep-learning algorithm to 42,290 patient CT scans to predict one’s likelihood of lung cancer. Because the images are difficult to read, Google and Northwestern’s study developed a machine-learning model to read them, then compared the results with those of six experienced radiologists. According to the study, the machine-learning model was able to detect cancer 5 percent more often than the radiologists and was 11 percent more likely to decrease false positives.

This is just one example, but it emphasizes the need for large-scale pattern recognition in creating predictive diagnostic models. The human brain can develop the deep-learning algorithms necessary for this kind of innovation, but only the algorithms can effectively recognize patterns at such a large and impactful scale.

Some may claim that the potential damage from a health care company data breach is far more complex than the damage from other forms of data warfare — and they are correct. Victims cannot simply change their passwords or cancel their credit cards to resolve the risks of identity theft, fraud, risk profiling, targeted psychographics, increased insurance premiums and other dangerous (and expensive) consequences.

Regardless, digital health care data will continue to be collected every day, providing tremendous opportunities for medical research and treatment, as well as the inevitable potential for danger that exists in all walks of digital life. Why not go ahead and put this information in the hands of the right agents, and establish strict regulation and enforcement protocols in the process?

With the support and intervention of regulatory bodies, there would need to be an extensive de-identification process to irreversibly anonymize our personal data. These bodies would also need to prohibit the monetization of health care data and prevent it from being used for profiling or any other unethical or criminal purpose. A zero-tolerance policy for foul use of our data will probably yield better results than another cybercrime consultant or better computer servers.

The vast amount of information each of us possesses is far too important to be left under the control of just a few entities — private or public. We can think of our health care data as a contribution to the public good and equalize its availability to scientists and researchers across disciplines, like open source code. From there, imagine better predictive models that will in turn allow better and earlier diagnoses, and eventually better treatments.

Your health care data could help people who are, at least in some medical aspects, very similar to you. It might even save their lives. The right thing to do with your data is not to guard it, but to share it.

Image Credit: Claire Merchlinsky via The New York Times
