Key Takeaways

- AI-driven decision paralysis is rooted in well-documented choice overload and anxiety-linked cognitive patterns that make high-stakes decisions harder under digital pressure.

- Structured frameworks reduce indecision by replacing open-ended evaluation with specific, repeatable criteria and time-bounded commitments.

- Persistent paralysis may signal an underlying anxiety-related mental health challenge; evidence-based therapy can improve both decision-making and overall functioning.

Access to more AI tools should mean faster decisions, but for many people it means the opposite: they freeze. That freeze is decision paralysis in action, and the delays add up. The problem runs deeper than tool overload.

Human cognition was never designed for the volume of options that modern AI environments generate. With a growing number of working-age adults using generative AI, the volume of competing recommendations, conflicting outputs, and overlapping platforms people manage daily continues to rise.

Understanding what's actually happening in your brain when the options stack up is the first step toward getting unstuck.

What is Decision Paralysis in the Age of AI?

AI-driven decision paralysis is the state in which the growing number of tools, data points, and AI-generated recommendations becomes so overwhelming that choosing any one path feels impossible. The result is not a bad decision but no decision at all.

The pattern connects to a well-documented concept called choice overload. Psychologist Barry Schwartz argued that beyond a certain number of options, more choices can increase anxiety and push people toward avoidance rather than action.

According to the Harvard Kennedy School Project on Workforce, 54.6% of US adults aged 18–64 had adopted generative AI by August 2025, an increase of 10 percentage points from the year before.

Each new adopter steps into an environment that offers hundreds of overlapping tools and few clear benchmarks for choosing between them.

Learning the psychological mechanics behind indecision helps you understand how AI affects mental health in the workplace, and it gives you a basis for working toward solutions.

Why Does AI Amplify Our Indecision?

Several forces can lead to AI-driven decision paralysis. A 2019 exploratory study of more than 1,000 upper-level managers, published in the International Journal of Environmental Research and Public Health, found that higher perceived choice overload from digital tools and greater pressure from digitalization were both linked to lower psychological well-being. The research predates the generative AI wave, which means today's tool sprawl likely adds even more strain on top of an already documented problem.

What makes this particularly difficult is that AI is designed to generate options, not close them. Without a clear process for when analysis ends and action begins, more data tends to produce more delay.

Developing coping skills for anxiety is one place to start when the psychological weight of these pressures becomes more than a workflow problem.

"AI generates too much for not enough space. Humans are simply not equipped for the burden of overload at the pace of which we receive it. It's important to limit and narrow the search while considering well enough versus perfection as an acceptable option. Otherwise, oversaturation increases overload fatigue while delaying discernable results."

- Talkspace Therapist, Elizabeth Keohan, LCSW-C

How Can You Quickly Diagnose AI-Driven Decision Paralysis?

Spotting AI-driven decision paralysis in yourself or your team is the first real move. People with anxiety disorders tend to dwell on negative outcomes, a pattern associated with slower decisions, avoidant behavior, and difficulty updating choices after setbacks.

These same patterns surface in non-clinical forms when AI-related pressure runs high.

Run this 5-question self-check:

- Have you delayed an AI-tool decision for more than two weeks without a clear reason?

- Are you collecting more data without feeling any closer to a choice?

- Does a new AI tool proposal trigger dread rather than curiosity?

- Has your team had the same "which tool should we use" conversation more than 3 times?

- Are you regularly second-guessing decisions you've already made and implemented?

Scoring your results:

- 0–1 yes answers (Green): Normal evaluation mode. Keep moving.

- 2–3 yes answers (Yellow): A structured framework is needed. Pick one (below) and test it this week.

- 4–5 yes answers (Red): Active decision avoidance is at work. Structural and potentially psychological support is warranted.
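If you want to run the self-check across a team, the scoring bands above can be sketched in a few lines of Python. This is only an illustration: the function name `score_self_check` and the lowercase band labels are assumptions made for the example, not part of any published tool.

```python
def score_self_check(answers: list[bool]) -> str:
    """Map five yes/no answers to the green/yellow/red bands described above.

    Hypothetical helper: the name and return values are illustrative only.
    """
    if len(answers) != 5:
        raise ValueError("the self-check has exactly 5 questions")

    yes_count = sum(answers)  # True counts as 1, False as 0

    if yes_count <= 1:
        return "green"   # normal evaluation mode
    if yes_count <= 3:
        return "yellow"  # pick a structured framework and test it this week
    return "red"         # structural and possibly psychological support is warranted


# Example: "yes" to questions 1 and 3 only
print(score_self_check([True, False, True, False, False]))
```

The band tells you which next step applies; it is a prompt for reflection, not a clinical assessment.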

Tool-sprawl audit: three questions to ask about your current stack:

- Which tools duplicate a function that another platform already handles?

- Which tools haven't been used in the past 30 days?

- Which tools have no measurable success criteria attached to them?
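For a larger stack, the three audit questions can be applied to a simple inventory. The sketch below assumes you keep a record per tool with its main function, the date it was last used, and a success metric if one exists. The `Tool` fields and the `audit_stack` name are hypothetical, chosen only for this example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Tool:
    name: str
    function: str                   # main job the tool does, e.g. "drafting"
    last_used: date
    success_metric: Optional[str]   # None means no measurable criterion is attached


def audit_stack(tools: list[Tool], today: date) -> dict[str, list[str]]:
    """Flag tools against the three audit questions (illustrative sketch)."""
    flags: dict[str, list[str]] = {
        "duplicate_function": [],
        "unused_30_days": [],
        "no_success_criteria": [],
    }
    first_tool_for: dict[str, str] = {}

    for tool in tools:
        # Question 1: does another tool already handle this function?
        if tool.function in first_tool_for:
            flags["duplicate_function"].append(tool.name)
        else:
            first_tool_for[tool.function] = tool.name

        # Question 2: has it gone unused for more than 30 days?
        if (today - tool.last_used).days > 30:
            flags["unused_30_days"].append(tool.name)

        # Question 3: is any measurable success criterion attached?
        if not tool.success_metric:
            flags["no_success_criteria"].append(tool.name)

    return flags
```

A tool that shows up under more than one flag is a reasonable first candidate for retirement.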

Tracking your internal reactions through a journal can reveal patterns in how you respond to ambiguity, separate from the tools themselves.

Which Frameworks Break Analysis Paralysis and Drive Action?

Structured decision frameworks do what open-ended deliberation can't: they impose a time limit on evaluation, define what "good enough" looks like, and force a conclusion. With AI surfacing new data points continuously, that structure keeps evaluation from becoming a permanent state. Here are four models that can help:

1. OODA loop for real-time AI choices

The OODA model moves through four phases: Observe, Orient, Decide, Act. It was designed for environments where conditions shift faster than traditional planning allows.

AI accelerates the Observe and Orient phases by aggregating data rapidly. The bottleneck is rarely information. It's the Decide step.

Using this cycle for AI choices means setting a hard time limit on the Orient phase, deciding with the best available information at that moment, and circling back to evaluate after execution. A SaaS product team, for instance, might cap their AI tool evaluation at 5 days before committing to a 30-day pilot.
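To make the time limit concrete, you can put both dates on the calendar before evaluation starts. The following is a minimal sketch that assumes calendar days rather than working days. The `timebox` function and its parameter names are illustrative, with defaults taken from the 5-day and 30-day figures in the example above.

```python
from datetime import date, timedelta


def timebox(start: date, evaluation_days: int = 5, pilot_days: int = 30) -> dict[str, date]:
    """Return the decide-by date and the pilot review date (calendar days assumed)."""
    decide_by = start + timedelta(days=evaluation_days)
    pilot_review = decide_by + timedelta(days=pilot_days)
    return {"decide_by": decide_by, "pilot_review": pilot_review}


plan = timebox(date(2025, 3, 3))
print(plan["decide_by"], plan["pilot_review"])
```

Fixing the review date up front is what closes the loop: the decision gets made on schedule, and the "circle back" step has a date attached.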

2. Prioritization matrix for AI tool selection

A 2-axis matrix plotting impact versus effort gives every tool under evaluation a visual position before discussion begins. With impact on the vertical axis and effort on the horizontal axis, high-impact, low-effort tools land in the upper-left quadrant. Start there.

Rating two tools as an example: Tool A scores high impact and low effort because it replaces a manual reporting task. Tool B scores high effort and uncertain impact because it requires 3 months of training with unverified outputs. Tool A goes first. Tool B gets re-evaluated in quarter 2.
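The same sorting can be sketched in code if your team prefers a spreadsheet-style score to a whiteboard. The example assumes each tool is rated from 1 to 5 on impact and effort and that a score of 4 or higher counts as "high". The scale, the threshold, the quadrant labels, and the numbers given to Tool A and Tool B are all assumptions for illustration; in particular, Tool B's uncertain impact is treated here as a low score.

```python
def quadrant(impact: int, effort: int, high: int = 4) -> str:
    """Place a tool in an impact/effort quadrant (1-5 scale assumed)."""
    high_impact = impact >= high
    high_effort = effort >= high
    if high_impact and not high_effort:
        return "do first"   # upper-left: high impact, low effort
    if high_impact and high_effort:
        return "plan"       # worthwhile, but needs scheduling
    if not high_impact and not high_effort:
        return "fill in"    # cheap, low payoff
    return "defer"          # high effort, low or unproven impact


# Hypothetical ratings for the two tools described above
ratings = {"Tool A": (5, 1), "Tool B": (2, 5)}
for name, (impact, effort) in ratings.items():
    print(name, "->", quadrant(impact, effort))
```

Agreeing on the ratings before anyone argues for a favorite tool is the point of the exercise; the code only makes the placement explicit.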

3. Trust Insights 5P + RACE hybrid prompt

The 5P structured prompting approach builds clarity into every AI request before you send it. The 5 components are Purpose, People, Process, Platform, and Performance.

Layering on RACE (Role, Action, Context, Execute) produces a prompt with explicit constraints, a defined use case, and a measurable output goal.

An example prompt: "You are a B2B content strategist [Role] writing a 200-word executive summary [Action] for a SaaS product launch targeting mid-market HR leaders [Context]. Use formal tone, no bullet points, optimize for comprehension by a non-technical reader [Execute and Performance]." That level of constraint reduces revision cycles and the second-guessing that follows vague AI output.
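If you reuse this structure often, a small template keeps every prompt from skipping a component. The sketch below is one possible way to assemble the four RACE parts into a single string. The function `build_race_prompt` is hypothetical and is not an official Trust Insights utility.

```python
def build_race_prompt(role: str, action: str, context: str, execute: str) -> str:
    """Assemble a prompt from the four RACE parts, refusing empty fields."""
    parts = {"role": role, "action": action, "context": context, "execute": execute}
    missing = [name for name, value in parts.items() if not value.strip()]
    if missing:
        raise ValueError(f"missing RACE component(s): {', '.join(missing)}")
    return f"You are {role.strip()} {action.strip()} {context.strip()}. {execute.strip()}"


prompt = build_race_prompt(
    role="a B2B content strategist",
    action="writing a 200-word executive summary",
    context="for a SaaS product launch targeting mid-market HR leaders",
    execute=(
        "Use formal tone, no bullet points, "
        "optimize for comprehension by a non-technical reader."
    ),
)
print(prompt)
```

Raising an error on an empty field is a deliberate choice: a prompt with no stated context or constraints is the kind of vague request that tends to produce output you then second-guess.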

4. Pick–Act–Learn cycle for rapid iteration

Under this model, the three steps are: Pick one option from your shortlist, Act on it for a defined trial period, and Learn from the outcome using preset metrics before deciding whether to continue.

A content team evaluating 4 AI writing tools could commit to 1 tool for two weeks, track output quality and time saved, and then decide whether to expand use, switch tools, or combine approaches. The feedback loop replaces the regret of an irreversible wrong choice with recoverable data from a bounded experiment.
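The "Learn" step works best when the decision rule is written down before the trial begins. Here is a minimal sketch of such a rule, assuming you set a numeric target for each metric in advance. The metric names, the target values, and the mapping from results to "expand", "combine", or "switch" are illustrative assumptions, not a prescribed method.

```python
def learn(results: dict[str, float], targets: dict[str, float]) -> str:
    """Compare trial results with preset targets and suggest a next step."""
    if not targets:
        raise ValueError("set at least one target before the trial starts")

    # A metric counts as met when the result reaches its target;
    # a metric that was never measured counts as not met.
    met = [name for name, target in targets.items()
           if name in results and results[name] >= target]

    if len(met) == len(targets):
        return "expand"    # every preset target was reached
    if met:
        return "combine"   # partial success: pair the tool with another approach
    return "switch"        # no target reached: try the next option on the shortlist


# Hypothetical two-week trial of one writing tool
targets = {"quality_score": 4.0, "hours_saved_per_week": 3.0}
results = {"quality_score": 4.2, "hours_saved_per_week": 1.5}
print(learn(results, targets))
```

Because the targets are fixed beforehand, the outcome of the trial is a data point to act on instead of a fresh round of deliberation.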

What are the First Steps You Should Take Today?

The path forward is clearer than it may feel right now. Start by running the 5-question self-check above to see where your paralysis sits on the green-yellow-red scale. That single step takes under five minutes and tells you whether a framework alone is enough or whether something deeper deserves attention.

From there, choose one framework from the four above and apply it to your most-stalled current decision. Pick the model that fits your decision type and use it this week.

Then schedule a decision review. Set a date two weeks out to assess what happened and adjust from there. This simple loop builds the decision-making capacity that chronic overload tends to erode.

If paralysis has become a recurring pattern rather than an occasional frustration, it may be worth exploring whether anxiety is playing a bigger role than your workflow. Persistent avoidance, difficulty tolerating uncertainty, and chronic second-guessing are all patterns that respond well to evidence-based mental health care.

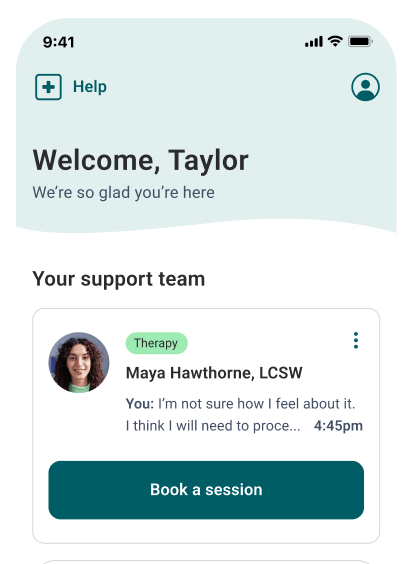

A licensed therapist at Talkspace can help you work through the underlying thought patterns that make complexity feel paralyzing. Online therapy is available via message-based therapy, live video sessions, and audio sessions, so you can access care in the format that fits your schedule. Visit Talkspace to get started.

Frequently Asked Questions (FAQs)

Can AI itself suffer from decision paralysis?

No, AI cannot suffer from decision paralysis. AI generates outputs based on probabilities and does not experience uncertainty like humans. What appears as "indecision" is due to model design or prompt quality, not cognitive overload. The paralysis is experienced by the person evaluating the output, not the AI.

Is analysis paralysis the same as decision fatigue?

No, analysis paralysis and decision fatigue are related but distinct. Decision fatigue occurs when the quality of decisions declines after making many choices in a row, while analysis paralysis happens when someone is unable to decide at all, often due to too many options or excessive uncertainty. Both can occur in high-pressure AI environments, but they require different solutions.

How do I choose the right AI tool among dozens?

To choose the right AI tool, score each option based on impact and effort using your team's use case. Eliminate duplicates, then run a 2-week pilot with your top 1 or 2 choices before deciding.

What role does data quality play in decision paralysis?

Data quality plays a vital role in decision paralysis. Poor or incomplete data can increase uncertainty, making it harder to make informed decisions, while high-quality, reliable data helps streamline the decision-making process and reduce confusion.

How often should decision frameworks be revisited?

Decision frameworks should be revisited regularly, ideally every 6 to 12 months, or whenever there are significant changes in goals, resources, or external factors. This ensures they remain relevant and effective in guiding decisions.

Sources:

- Walton D, Project on Workforce Team. The generative AI adoption tracker. Harvard Kennedy School Project on Workforce. https://pw.hks.harvard.edu/post/the-generative-ai-adoption-tracker. 2025 Nov 13. Accessed March 05, 2026.

- Zeike S, Choi KE, Lindert L, Pfaff H. Managers' well-being in the digital era: Is it associated with perceived choice overload and pressure from digitalization? An exploratory study. International Journal of Environmental Research and Public Health. https://pmc.ncbi.nlm.nih.gov/articles/PMC6572357/. 2019 May 17;16(10):1746. Accessed March 05, 2026.

- Gkintoni E, Suárez Ortiz P. Neuropsychology of generalized anxiety disorder in clinical setting: a systematic evaluation. Healthcare. https://pmc.ncbi.nlm.nih.gov/articles/PMC10486954/. 2023;11(16):2370. Accessed March 05, 2026.

Talkspace articles are written by experienced mental health-wellness contributors; they are grounded in scientific research and evidence-based practices. Articles are extensively reviewed by our team of clinical experts (therapists and psychiatrists of various specialties) to ensure content is accurate and on par with current industry standards.

Our goal at Talkspace is to provide the most up-to-date, valuable, and objective information on mental health-related topics in order to help readers make informed decisions.

Articles contain trusted third-party sources that are either directly linked to in the text or listed at the bottom to take readers directly to the source.
